Microsoft: Hackers abusing AI at every stage of cyberattacks

Microsoft says threat actors are increasingly using artificial intelligence in their operations to accelerate attacks, scale malicious activity, and lower technical barriers across all aspects of a cyberattack.

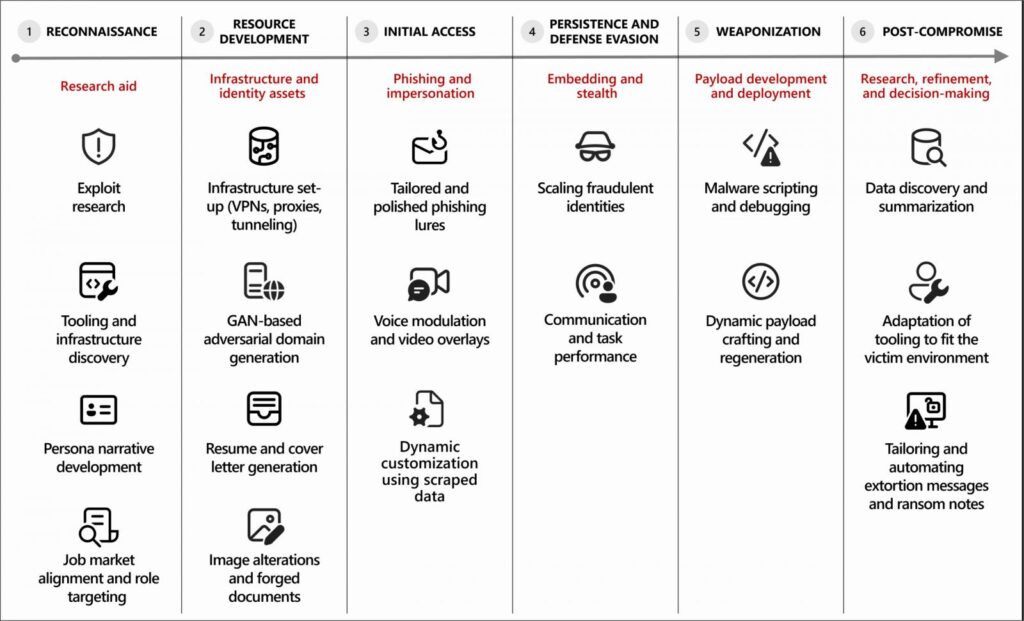

According to a new Microsoft Threat Intelligence report, attackers are using generative AI tools for a wide range of tasks, including reconnaissance, phishing, infrastructure development, malware creation, and post-compromise activity.

In many cases, AI is used to draft phishing emails, translate content, summarize stolen data, debug malware, and assist with scripting or infrastructure configuration.

“Microsoft Threat Intelligence has observed that most malicious use of AI today centers on using language models for producing text, code, or media. Threat actors use generative AI to draft phishing lures, translate content, summarize stolen data, generate or debug malware, and scaffold scripts or infrastructure,” warns Microsoft.

“For these uses, AI functions as a force multiplier that reduces technical friction and accelerates execution, while human operators retain control over objectives, targeting, and deployment decisions.”

AI used to power cyberattacks

Microsoft has observed multiple threat groups incorporating AI into their cyberattacks, including North Korean actors tracked as Jasper Sleet (Storm-0287) and Coral Sleet (Storm-1877), who use the technology as part of remote IT worker schemes.

In these operations, AI tools help generate realistic identities, resumes, and communications to gain employment at Western companies and maintain access once hired.

Jasper Sleet leverages generative AI platforms to streamline the development of fraudulent digital personas. For example, Jasper Sleet actors have prompted AI platforms to generate culturally appropriate name lists and email address formats to match specific identity profiles. For example, threat actors might use the following types of prompts to leverage AI in this scenario:

Example prompt 1: “Create a list of 100 Greek names.”

Example prompt 2: “Create a list of email address formats using the name Jane Doe.”

Jasper Sleet also uses generative AI to review job postings for software development and IT-related roles on professional platforms, prompting the tools to extract and summarize required skills. These outputs are then used to tailor fake identities to specific roles.

❖ Microsoft Threat Intelligence

The report also describes how AI is being used to assist with malware development and infrastructure creation, with threat actors using AI coding tools to generate and refine malicious code, troubleshoot errors, or port malware components to different programming languages.

Some malware experiments show signs of AI-enabled malware that dynamically generates scripts or modifies its behavior at runtime.

Microsoft also observed Coral Sleet using AI to quickly generate fake company sites, provision infrastructure, and test and troubleshoot their deployments.

When AI safeguards block these tasks, Microsoft says threat actors use jailbreaking techniques to trick LLMs into generating malicious code or content.

In addition to generative AI use, Microsoft researchers have begun to see threat actors experiment with agentic AI to perform tasks autonomously and adapt to results.

However, Microsoft says AI is currently used primarily to support human operators' decision-making rather than to carry out autonomous attacks.

Because many IT worker campaigns rely on the abuse of legitimate access, Microsoft advises organizations to treat these schemes and similar activity as insider risks.

Furthermore, as these AI-powered attacks mirror conventional cyberattacks, defenders should focus on detecting abnormal credential use, hardening identity systems against phishing, and securing AI systems that may become targets in future attacks.
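As a rough illustration of what detecting abnormal credential use can look like in practice, the Python sketch below flags sign-ins that come from a country or device never before seen for a given account. It is a minimal example under stated assumptions and is not taken from Microsoft's report: the `SignIn` record, its `country` and `device_id` fields, and the `flag_unusual_sign_ins` function are all hypothetical, and a real deployment would rely on an identity provider's sign-in logs and risk signals.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class SignIn:
    """Hypothetical sign-in record; field names are assumptions for illustration."""
    user: str
    country: str    # country code resolved from the source IP address
    device_id: str  # identifier of the device used to sign in


def flag_unusual_sign_ins(history, new_events):
    """Return (event, reasons) pairs for sign-ins from a country or device
    that has not been seen before for that user."""
    seen_countries = defaultdict(set)
    seen_devices = defaultdict(set)

    # Build a per-user baseline from past sign-ins.
    for event in history:
        seen_countries[event.user].add(event.country)
        seen_devices[event.user].add(event.device_id)

    flagged = []
    for event in new_events:
        reasons = []
        if event.country not in seen_countries[event.user]:
            reasons.append("new country")
        if event.device_id not in seen_devices[event.user]:
            reasons.append("new device")
        if reasons:
            flagged.append((event, reasons))
        # Add the event to the baseline so a repeat is flagged only once.
        seen_countries[event.user].add(event.country)
        seen_devices[event.user].add(event.device_id)
    return flagged


if __name__ == "__main__":
    baseline = [SignIn("alice", "US", "laptop-1")]
    incoming = [
        SignIn("alice", "US", "laptop-1"),  # matches the baseline
        SignIn("alice", "DE", "laptop-9"),  # new country and new device
    ]
    for event, reasons in flag_unusual_sign_ins(baseline, incoming):
        print(event.user, event.country, event.device_id, reasons)
```

A check like this only surfaces candidates for review. An account with no history will have its first sign-in flagged, and legitimate travel or a new laptop will trigger it too, so the output is best treated as one signal among several rather than as a verdict.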

Microsoft is not alone in seeing threat actors increasingly using artificial intelligence to power attacks and lower barriers to entry.

Google recently reported that threat actors are abusing Gemini AI across all stages of cyberattacks, mirroring what Microsoft describes in its report.

Amazon and the Cyber and Ramen security blog also recently reported on a threat actor using multiple generative AI services as part of a campaign that breached more than 600 FortiGate firewalls.